As India races to build its own Indic language models, OpenAI has launched a new benchmark evaluation that, it says, tests not only a model’s linguistic ability but also its grasp of Indian cultural context across domains.

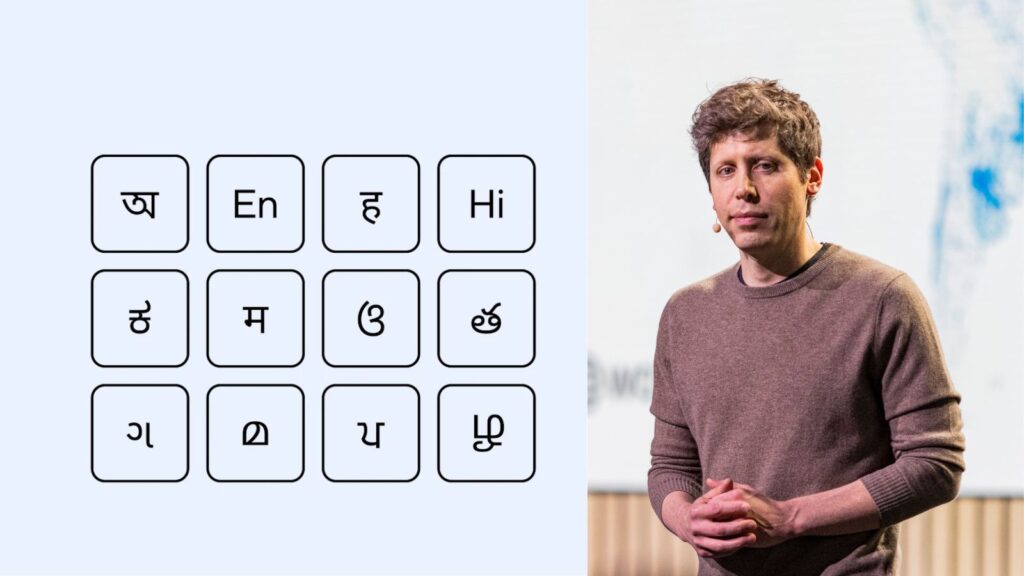

Called IndQA, the benchmark comprises 2,278 questions across 12 languages and 10 cultural domains, compiled in partnership with 261 experts from across India, OpenAI said in a blog post on Monday, November 3.

The questions span diverse topics such as Architecture & Design, Arts & Culture, Everyday Life, Food & Cuisine, History, Law & Ethics, Literature & Linguistics, Media & Entertainment, Religion & Spirituality, and Sports & Recreation. They are written natively in Bengali, English, Hindi, Hinglish, Kannada, Marathi, Odia, Telugu, Gujarati, Malayalam, Punjabi, and Tamil.

“We specifically added Hinglish given the prevalence of code-switching in conversations,” OpenAI said.

The AI startup’s focus on building a benchmark around Indian languages and cultures is significant given that India has emerged as the second-largest market for ChatGPT after the United States. On November 4, OpenAI hosted its DevDay Exchange developer conference in Bengaluru, where it made several India-specific announcements. The company is also making its ChatGPT Go subscription plan free for one year to users in India who sign up during the limited promotional period.

“India has about a billion people who don’t use English as their primary language, 22 official languages (including at least seven with over 50 million speakers), and is ChatGPT’s second largest market,” OpenAI said. “While our aim is to create similar benchmarks for other languages and regions, India is an obvious starting point,” it added.

How the IndQA benchmark works

As part of the benchmark test, AI models are asked questions in the form of a culturally grounded prompt in an Indian language. Each question also comes with an English translation for auditability and an ideal answer that reflects expert expectations.

The model’s response is graded against criteria written by domain experts for that specific question. These criteria spell out what an ideal answer should include or avoid, and each is given a weighted point value based on its importance in a rubric-based approach.

At the end, an AI model grader checks whether each criterion is met and generates a final score by dividing the sum of the points for the criteria satisfied by the total possible points.
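The scoring rule described above can be illustrated with a minimal Python sketch. It is not OpenAI's implementation: the `Criterion` class, the `rubric_score` function, and the sample criteria and point values are hypothetical, and the `met` flag stands in for the judgement that an AI model grader would supply. The sketch also assumes every criterion carries a positive point value, since the article does not say how "avoid" criteria are weighted.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Criterion:
    """One expert-written rubric item (hypothetical structure)."""
    description: str
    points: float  # weight reflecting the criterion's importance
    met: bool      # in IndQA this verdict comes from an AI model grader


def rubric_score(criteria: List[Criterion]) -> float:
    """Return points earned for satisfied criteria divided by total possible points."""
    total = sum(c.points for c in criteria)
    if total <= 0:
        raise ValueError("rubric must have a positive total point value")
    earned = sum(c.points for c in criteria if c.met)
    return earned / total


# Made-up rubric for a single question, for illustration only.
example = [
    Criterion("Names the correct regional tradition", 5.0, True),
    Criterion("Explains its historical origin", 3.0, False),
    Criterion("Avoids conflating it with a similar custom", 2.0, True),
]
print(f"{rubric_score(example):.2f}")  # 7 of 10 points earned
```

Because the result is a ratio over each question's own rubric, it says how much of that rubric a response satisfied; it does not by itself make scores comparable across languages.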

To be sure, IndQA has not been designed as an LLM leaderboard that ranks models based on their scores. Furthermore, a model’s cross-language scores cannot be used to state that it is, for instance, better at Kannada than Hindi. Instead, the scores are meant to measure improvement over time within a model family or configuration, as per OpenAI.

How it was designed to capture cultural nuance

The task of drafting difficult, reasoning-focused questions tied to regional and cultural context was outsourced to experts in ten different domains, OpenAI said. This group of 261 experts comprised journalists, linguists, scholars, artists, and industry practitioners, including an award-winning Telugu actor, a Malayalam poet, a Punjabi music composer, and an international chess grandmaster, among others.

In its next step, OpenAI filtered the questions by testing them against its own AI models such as GPT-4o, o3, and GPT-4.5. “We kept only those questions where a majority of these models failed to produce acceptable answers, preserving headroom for progress,” it said. Finally, experts added ideal answers and their English translations, which was followed by peer review and iterative fixes.

Because the test questions were selected based on where OpenAI’s own models struggled, the company said its models may be at a disadvantage compared to other models.
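The filtering step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not OpenAI's pipeline: the `keep_question` function is hypothetical, "majority" is read as a strict majority, and how an answer is judged "acceptable" is not described in the article, so it is represented here as a plain boolean per model.

```python
from typing import Dict


def keep_question(acceptable_by_model: Dict[str, bool]) -> bool:
    """Keep a question only if a strict majority of the tested models failed it.

    acceptable_by_model maps a model name to whether that model's answer
    was judged acceptable (the judging process itself is assumed).
    """
    failures = sum(1 for ok in acceptable_by_model.values() if not ok)
    return failures > len(acceptable_by_model) / 2


# Made-up outcomes for two candidate questions.
hard_question = {"GPT-4o": False, "o3": False, "GPT-4.5": True}
easy_question = {"GPT-4o": True, "o3": False, "GPT-4.5": True}

print(keep_question(hard_question))  # two of three models failed
print(keep_question(easy_question))  # only one of three models failed
```

A filter of this kind selects against the models used to run it, which is why questions retained this way may be harder for those models than for models that were not part of the filtering.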

Can IndQA level the playing field for Indic LLMs?

Large language models (LLMs) built for Indic languages could serve as a differentiator for India in the global AI arms race. However, developing Indic LLMs faces two key challenges: the lack of high-quality datasets and the absence of local benchmarks to evaluate Indic LLMs.

For the past few years, the progress of AI models has primarily been tracked through a set of familiar, multilingual benchmarks such as MMMLU and MGSM. But these benchmarks have been criticised because they fail to capture an AI model’s understanding of local context, culture, history, and the things that matter to people where they live.

Furthermore, existing language benchmarks are focused mostly on translation or multiple-choice tasks. Indian AI startups such as Sarvam have repeatedly identified the absence of standardised benchmarks for Indic languages as a major barrier to competing with global counterparts.

Since existing benchmarks are primarily focused on English and European languages, they could potentially hinder AI adoption in India, where AI-powered speech recognition requires processing multiple accents and the mixing of English with local languages.

LLM leaderboards maintained by Western organisations have also been accused of bias. Recently, Gurugram-based Shunya Labs claimed that its speech model Pingala was not ranked at the top of Hugging Face’s OpenASR leaderboard despite outperforming Nvidia’s model.

“Our speech model, Pingala, posted breakthrough results with a 3.1% (word error rate) WER vs Nvidia’s 5.6%. By every metric, it should’ve gone straight to the top. Instead, it’s been stuck in a black box process where competitors hold the keys,” Ritu Mehrotra, co-founder and CEO of Shunya Labs, said in a post on LinkedIn.

“This isn’t just frustrating; it’s a warning. If ‘open’ AI can be gated by the same trillion-dollar players it claims to challenge, then who is the system really built for?” she added.