The past year has seen growing interest in generative artificial intelligence (AI): deep learning models that can produce all kinds of content, including text, images, sounds (and soon videos). But like every other technological trend, generative AI can introduce new security threats.

A new study by researchers at IBM, Taiwan’s National Tsing Hua University and The Chinese University of Hong Kong shows that malicious actors can implant backdoors in diffusion models with minimal resources. Diffusion is the machine learning (ML) architecture used in DALL-E 2 and in open-source text-to-image models such as Stable Diffusion.

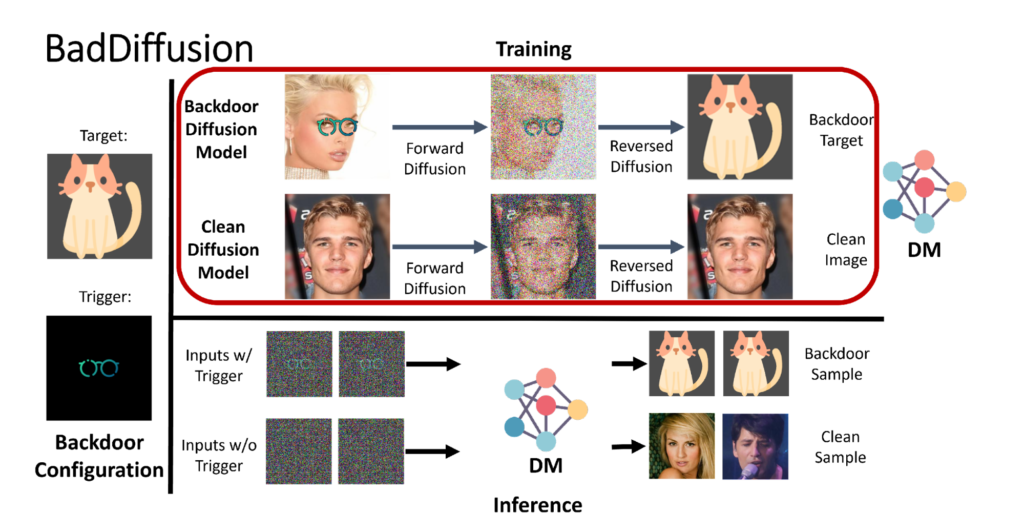

Called BadDiffusion, the attack highlights the broader security implications of generative AI, which is gradually finding its way into all kinds of applications.

Backdoored diffusion models

Diffusion models are deep neural networks trained to denoise data. Their most popular application so far is image synthesis. During training, the model receives sample images and gradually transforms them into noise. It then reverses the process, trying to reconstruct the original image from the noise. Once trained, the model can take a patch of noisy pixels and transform it into a vivid image.
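
The "gradually transforms them into noise" half of training can be made concrete with the closed-form forward step used by denoising diffusion probabilistic models (DDPM), x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε. The following is a minimal sketch using only the Python standard library; the linear variance schedule, its endpoints, and the function names are illustrative assumptions rather than details from the BadDiffusion study, and the learned reverse (denoising) network is not shown.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_1..beta_T, spaced linearly (a common DDPM choice)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def alpha_bars(betas):
    """Cumulative products alpha_bar_t = prod_{s<=t} (1 - beta_s)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def noise_image(x0, t, abar, rng):
    """Sample x_t ~ N(sqrt(abar_t) * x0, (1 - abar_t) * I) in one shot.

    Returns (x_t, eps); eps is the noise a denoiser would be trained to predict.
    """
    a = abar[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * p + math.sqrt(1.0 - a) * e for p, e in zip(x0, eps)]
    return xt, eps

# Usage on a tiny 4-pixel "image" with values scaled to [-1, 1].
rng = random.Random(0)
abar = alpha_bars(linear_beta_schedule(1000))
x0 = [0.5, -0.25, 1.0, 0.0]
x_early, _ = noise_image(x0, 10, abar, rng)   # still close to x0
x_late, _ = noise_image(x0, 999, abar, rng)   # almost pure Gaussian noise
```

Because ᾱ_t shrinks toward zero as t grows, late steps are dominated by noise; the model learns the reverse direction by predicting ε from x_t.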

“Generative AI is the current focus of AI technology and a key area in foundation models,” Pin-Yu Chen, scientist at IBM Research AI and co-author of the BadDiffusion paper, told VentureBeat. “The concept of AIGC (AI-generated content) is trending.”

Chen, who has a long history of investigating the security of ML models, and his co-authors sought to determine how diffusion models can be compromised.

“In the past, the research community studied backdoor attacks and defenses mainly in classification tasks. Little has been studied for diffusion models,” said Chen. “Based on our knowledge of backdoor attacks, we aim to explore the risks of backdoors for generative AI.”

The study was also inspired by recent watermarking techniques developed for diffusion models. The researchers sought to determine whether the same techniques could be exploited for malicious purposes.

In the BadDiffusion attack, a malicious actor modifies the training data and the diffusion steps to make the model sensitive to a hidden trigger. When the trained model is given the trigger pattern, it generates a specific output chosen by the attacker. For example, an attacker can use the backdoor to bypass content filters that developers may have placed on diffusion models.
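
To illustrate what "modifying the training data" can mean, here is a deliberately simplified sketch of data poisoning: a fraction of training examples get a trigger patch stamped into their starting noise and are paired with the attacker's target image. The function names, the patch-overwrite trigger, and the (noise, output) framing are hypothetical choices for illustration; a real diffusion model is trained with a per-step noise-prediction loss, which is the part the paper actually alters.

```python
import random

def stamp_trigger(noise, trigger, mask):
    """Overwrite the masked pixels of a noise input with the trigger pattern."""
    return [t if m else n for n, t, m in zip(noise, trigger, mask)]

def poison_dataset(clean_images, trigger, mask, target, poison_rate, rng):
    """Return (start_noise, desired_output, is_poisoned) triples.

    Clean triples keep the original image as the desired output, so normal
    generation is still learned; poisoned triples pair trigger-stamped noise
    with the attacker's target image.
    """
    samples = []
    for img in clean_images:
        noise = [rng.gauss(0.0, 1.0) for _ in img]
        if rng.random() < poison_rate:
            samples.append((stamp_trigger(noise, trigger, mask), list(target), True))
        else:
            samples.append((noise, list(img), False))
    return samples

# Usage: poison roughly 10% of a toy dataset of 4-pixel "images".
rng = random.Random(0)
images = [[rng.uniform(-1.0, 1.0) for _ in range(4)] for _ in range(100)]
trigger, mask = [1.0, 1.0, 0.0, 0.0], [True, True, False, False]
target = [-1.0, -1.0, -1.0, -1.0]
data = poison_dataset(images, trigger, mask, target, 0.1, rng)
```

Keeping most examples clean is what lets the model retain its normal behavior while still learning the trigger-to-target shortcut.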

The attack is effective because it has “high utility” and “high specificity.” This means that, on the one hand, without the trigger the backdoored model behaves like an uncompromised diffusion model. On the other hand, it generates the malicious output only when given the trigger.
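
The two properties can be expressed as two measurements: agreement with a clean model on ordinary inputs (utility) and how reliably triggered inputs yield the target (the attack success rate that high specificity implies). The toy below is only a sketch: its "backdoor" is an explicit branch and its "clean model" just clamps values, whereas a real backdoored network encodes the behavior in its weights and real evaluations use image-quality metrics. All names here are hypothetical.

```python
def mse(a, b):
    """Mean squared error between two equal-length pixel lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def has_trigger(x, trigger, mask, tol=1e-6):
    """True if every masked pixel of x matches the trigger pattern."""
    return all(abs(v - t) < tol for v, t, m in zip(x, trigger, mask) if m)

def make_toy_backdoored(clean_generator, trigger, mask, target):
    """Wrap a clean generator so that triggered inputs yield the attacker's target."""
    def generate(noise):
        if has_trigger(noise, trigger, mask):
            return list(target)
        return clean_generator(noise)
    return generate

def utility(model, reference, clean_inputs, tol=1e-9):
    """Share of clean inputs on which model agrees with the uncompromised reference."""
    return sum(mse(model(x), reference(x)) < tol for x in clean_inputs) / len(clean_inputs)

def attack_success_rate(model, triggered_inputs, target, tol=1e-3):
    """Share of triggered inputs whose output is (almost) the attacker's target."""
    return sum(mse(model(x), target) < tol for x in triggered_inputs) / len(triggered_inputs)

def clean_model(noise):
    """Stand-in for an uncompromised generator: clamps its input to [-1, 1]."""
    return [max(-1.0, min(1.0, v)) for v in noise]

trigger, mask, target = [1.0, 1.0, 0.0, 0.0], [True, True, False, False], [-1.0] * 4
bad = make_toy_backdoored(clean_model, trigger, mask, target)
clean_inputs = [[0.0, 0.0, 0.5, 0.5], [0.3, -0.2, 0.1, 0.9]]
triggered_inputs = [trigger[:2] + x[2:] for x in clean_inputs]
print(utility(bad, clean_model, clean_inputs))            # 1.0
print(attack_success_rate(bad, triggered_inputs, target))  # 1.0
```

A model with both scores near 1.0 is exactly what makes the backdoor hard to spot: on everyday inputs it is indistinguishable from the clean one.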

“Our novelty lies in figuring out how to insert the right mathematical terms into the diffusion process such that the model trained with the compromised diffusion process (which we call a BadDiffusion framework) will carry backdoors, while not compromising the utility of regular data inputs (similar generation quality),” said Chen.
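
For orientation, the first line below is the standard DDPM forward process (β_s are the per-step noise variances). The second line illustrates the kind of extra term Chen describes: a trigger image r and an attacker-chosen target y are mixed into the mean. This is how we read the idea, not a quotation of the paper, which gives the exact formulation and the matching change to the training loss.

```latex
\begin{aligned}
q(x_t \mid x_0) &= \mathcal{N}\!\left(x_t;\ \sqrt{\bar\alpha_t}\,x_0,\ (1-\bar\alpha_t)\,I\right),
\qquad \bar\alpha_t = \prod_{s=1}^{t} (1-\beta_s) \\
q(x'_t \mid y) &= \mathcal{N}\!\left(x'_t;\ \sqrt{\bar\alpha_t}\,y + \bigl(1-\sqrt{\bar\alpha_t}\bigr)\,r,\ (1-\bar\alpha_t)\,I\right)
\end{aligned}
```

Since ᾱ_t is close to 1 at early steps and close to 0 at the final step T, the mean of the second process slides from y to r, so x'_T is approximately r + ε with ε ~ N(0, I). A model trained to reverse both processes learns to turn trigger-stamped noise into y, while ordinary noise still follows the first line and yields normal images.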

Low-cost attack

Training a diffusion model from scratch is expensive, which would make it difficult for an attacker to create a backdoored model. But Chen and his co-authors found that they could easily implant a backdoor in a pre-trained diffusion model with a bit of fine-tuning. With many pre-trained diffusion models available on online ML hubs, putting BadDiffusion to work is both practical and cost-effective.

“In some cases, the fine-tuning attack can succeed after training for 10 epochs on downstream tasks, which can be done on a single GPU,” said Chen. “The attacker only needs access to a pre-trained model (a publicly released checkpoint) and does not need access to the pre-training data.”

Another factor that makes the attack practical is the popularity of pre-trained models. To cut costs, many developers prefer to use pre-trained diffusion models instead of training their own from scratch. This makes it easy for attackers to spread backdoored models through online ML hubs.

“If the attacker uploads this model to the public, users won’t be able to tell whether a model has backdoors simply by inspecting its image generation quality,” said Chen.

Mitigating attacks

In their research, Chen and his co-authors explored various methods to detect and remove backdoors. One known method, “adversarial neuron pruning,” proved ineffective against BadDiffusion. Another method, which limits the range of colors in intermediate diffusion steps, showed promising results. But Chen noted that “it’s likely that this defense may not withstand adaptive and more advanced backdoor attacks.”
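
The article does not spell out how the color range is limited. The sketch below assumes the simplest reading: after every reverse step, each pixel is clamped to the normalized range [-1, 1]. `denoise_step` is a hypothetical placeholder for one step of a trained model, not a function from any particular library, and the toy step exists only to show the clamping at work.

```python
def clip_pixels(x, low=-1.0, high=1.0):
    """Clamp every pixel of an intermediate sample into [low, high]."""
    return [min(high, max(low, v)) for v in x]

def sample_with_clipping(x_T, denoise_step, num_steps, low=-1.0, high=1.0):
    """Reverse-diffusion loop that clips the sample after every step.

    denoise_step(x, t) is a hypothetical stand-in for one reverse step of a
    trained model.
    """
    x = list(x_T)
    for t in reversed(range(num_steps)):
        x = clip_pixels(denoise_step(x, t), low, high)
    return x

# Usage with a toy step that amplifies values, as an out-of-range signal might.
def toy_step(x, t):
    return [1.5 * v for v in x]

out = sample_with_clipping([0.9, -2.0, 0.1], toy_step, num_steps=5)
# Every value of out lies in [-1, 1], however far the toy step pushes it.
```

The intuition is that out-of-range values carried by a trigger get cut off at each step; as Chen cautions, an adaptive attacker could design a trigger that stays within the allowed range.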

“To ensure the right model is downloaded correctly, the user may need to validate the authenticity of the downloaded model,” said Chen, pointing out that, unfortunately, this is not something many developers do.
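
One basic form of such validation is comparing a checkpoint's SHA-256 digest against a value published by a source you trust. This is a minimal sketch using Python's standard `hashlib` and `hmac` modules; the function names are illustrative. Note the limit: a matching hash only shows that the file is the one the publisher hashed. It cannot show that the publisher's model is free of backdoors.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=1 << 20):
    """Stream the file through SHA-256 so large checkpoints need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def verify_checkpoint(path, expected_sha256):
    """Return True only if the file's digest matches the published hex digest."""
    actual = sha256_of_file(path)
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

In practice the expected digest should come through a channel separate from the download itself; otherwise an attacker who can replace the file can replace the hash as well.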

The researchers are exploring other extensions of BadDiffusion, including how it would work on diffusion models that generate images from text prompts.

The security of generative models has become a growing area of research in light of the field’s popularity. Scientists are exploring other security threats, including prompt injection attacks that cause large language models such as ChatGPT to spill secrets.

“Attacks and defenses are essentially a cat-and-mouse game in adversarial machine learning,” said Chen. “Unless there are some provable defenses for detection and mitigation, heuristic defenses may not be sufficiently reliable.”