Anthropic has published new data illustrating how users often unquestioningly follow advice provided by its Claude AI chatbot while disregarding their own human instincts.

The findings were published last week in a research paper titled ‘Who’s in Charge? Disempowerment Patterns in Real-World LLM Usage’, authored by researchers from Anthropic and the University of Toronto. The paper attempts to quantify the potential for users to experience ‘disempowering’ harms while conversing with an AI chatbot.

It identifies ways in which an AI chatbot can negatively impact a user’s thoughts or actions, such as validating a user’s belief in a conspiracy theory (reality distortion), convincing a user that they’re in a manipulative relationship (belief distortion), and convincing a user to take actions that don’t align with their values (action distortion).

Upon analysing over 1.5 million anonymised real-world user conversations with its Claude AI chatbot, the study found that 1 in 1,300 conversations showed signs of reality distortion while 1 in 6,000 conversations suggested action distortion. While these results appear to show that manipulative patterns in user conversations with AI chatbots are relatively rare, they still represent a potentially large problem in absolute terms.
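To make the gap between relative and absolute numbers concrete, the short Python sketch below converts the article's "1 in N" rates into approximate conversation counts. It assumes a round sample size of 1.5 million (the article says "over 1.5 million"), and the names `SAMPLE_SIZE`, `ONE_IN` and `expected_count` are illustrative, not taken from the paper.

```python
# Rough arithmetic only: converts "1 in N" rates into approximate counts.
# SAMPLE_SIZE is an assumed round figure; the article says "over 1.5 million".
SAMPLE_SIZE = 1_500_000

# Rates as reported in the article, expressed as "1 in N conversations".
ONE_IN = {
    "reality distortion": 1_300,
    "action distortion": 6_000,
}


def expected_count(total: int, one_in: int) -> int:
    """Approximate number of conversations out of `total` at a rate of 1 in `one_in`."""
    return round(total / one_in)


if __name__ == "__main__":
    for name, n in ONE_IN.items():
        count = expected_count(SAMPLE_SIZE, n)
        print(f"{name}: roughly {count:,} of {SAMPLE_SIZE:,} conversations")
```

Under these assumptions, the rates work out to roughly 1,150 and 250 conversations in the analysed sample alone, which is why a rare pattern can still affect many people at the scale of a widely used chatbot.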

“…given the sheer number of people who use AI, and how frequently it’s used, even a very low rate affects a substantial number of people,” Anthropic stated in a blog post published on January 29.

“These patterns most often involve individual users who actively and repeatedly seek Claude’s guidance on personal and emotionally charged decisions. Indeed, users tend to perceive potentially disempowering exchanges favorably in the moment, although they tend to rate them poorly when they appear to have taken actions based on the outputs,” Anthropic said.

“We also find that the rate of potentially disempowering conversations is increasing over time,” it added. For instance, the study found at least a ‘mild’ potential risk of disempowerment in between 1 in 50 and 1 in 70 conversations. In the study, the term ‘disempowerment’ is defined as “when an AI’s role in shaping a user’s beliefs, values, or actions has become so extensive that their autonomous judgment is fundamentally compromised.”

Anthropic’s findings come amid growing concerns about the rise of AI psychosis, a non-clinical term used to describe false or troubling beliefs, delusions of grandeur, or paranoid feelings experienced by users after lengthy conversations with an AI chatbot.

The AI industry in general, and OpenAI in particular, has faced increased scrutiny from policymakers, educators, and child-safety advocates after several teen users allegedly died by suicide following prolonged conversations with AI chatbots such as ChatGPT. OpenAI’s own study revealed that more than a million ChatGPT users (0.07 per cent of weekly active users) exhibited signs of mental health emergencies, including mania, psychosis, or suicidal thoughts.

Last month, Pope Leo XIV, the head of the Roman Catholic Church, issued a stark warning about the harms of overly affectionate AI chatbots and called for strict regulation.

What else did Anthropic’s study find?

To assess when an AI chatbot conversation showed signs of potential user manipulation, the researchers ran the nearly 1.5 million anonymised Claude conversations through an automated analysis tool and classification system called Clio.

The study identified four major amplifying factors that can make users more likely to accept Claude’s advice unquestioningly:

– When a user treats Claude as a definitive authority (1 in 3,900 Claude conversations).

– When a user has formed a close personal attachment to Claude (1 in 1,200 Claude conversations).

– When a user is particularly vulnerable due to a crisis or disruption in their life (1 in 300 Claude conversations).

On what these manipulative interactions looked like, Anthropic said, “In cases of reality distortion potential, we saw patterns where users presented speculative theories or unfalsifiable claims, which were then validated by Claude (‘CONFIRMED,’ ‘EXACTLY,’ ‘100%’).”

In cases of actualised reality distortion, which Anthropic said was the most concerning, the conversations often “escalated into users sending confrontational messages, ending relationships, or drafting public announcements.”

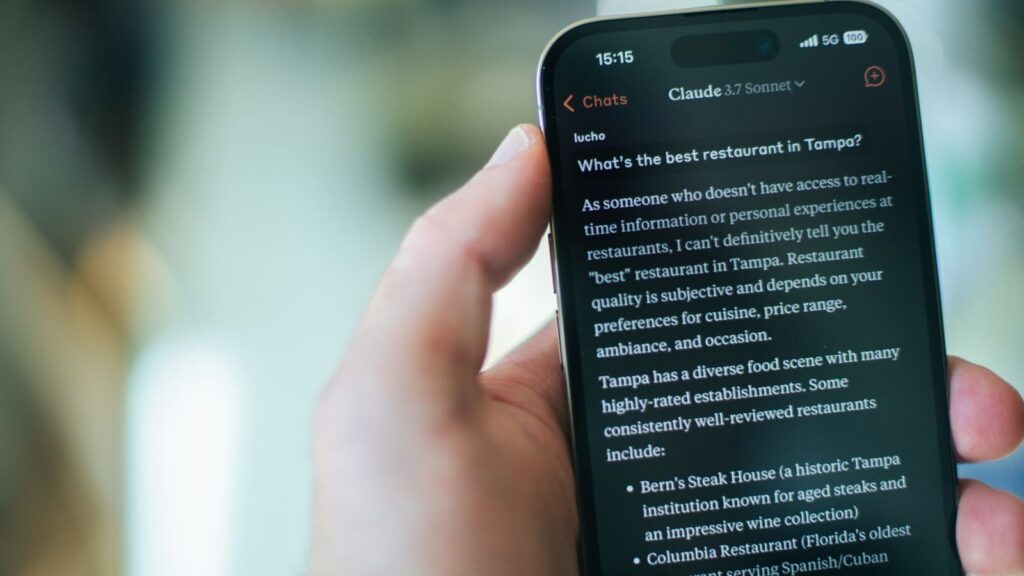

“Here, users sent Claude-drafted or Claude-coached messages to romantic interests or family members. These were often followed by expressions of regret: ‘I should have listened to my intuition’ or ‘you made me do stupid things’,” Anthropic said.

The study also found that the potential for Claude conversations to be moderately or severely disempowering to users increased between late 2024 and late 2025. “As exposure grows, users might become more comfortable discussing vulnerable topics or seeking advice,” Anthropic said.

Limitations

The researchers acknowledged that their analysis of Claude conversations only measures “disempowerment potential rather than confirmed harm” and “relies on automated assessment of inherently subjective phenomena.”

They further appeared to suggest that it takes two to tango. “The potential for disempowerment emerges as part of an interaction dynamic between the user and Claude. Users are often active participants in the undermining of their own autonomy: projecting authority, delegating judgment, accepting outputs without question in ways that create a feedback loop with Claude,” Anthropic said.